Batch module: executes Definition sets automatically and at scheduled times

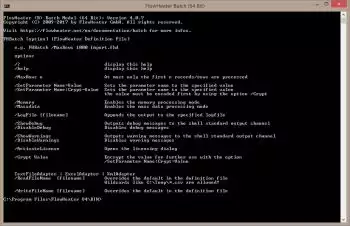

The FlowHeater utility "FHBatch.exe" is a shell application that is controlled by command line parameters and/or control file parameters. The batch module can execute any stored FlowHeater Definition without the Designer graphical interface. For example, after testing and saving a Definition set in Designer, you can set everything up to run on a Windows Server using scheduled Windows tasks.

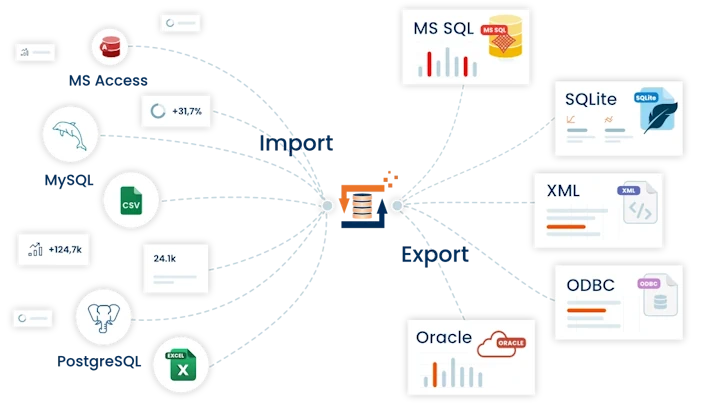

The batch module is ideal for Definition runs that take a long time or for frequently repeated tasks. Examples are the import, export or update of large databases, which can run as an overnight batch job, and the automatic creation of test data during a development project.

Example command line

FHBatch.exe /MaxRows 1000 C:\Temp\Import\Turnover.fhd

These parameters instruct FlowHeater to run the Definition set C:\Temp\Import\Turnover.fhd but to process only the first 1000 rows.

Note: If authorization is required to connect to a database (SQL Server, Oracle, MySQL, etc.), the necessary database, username and password details must be stored in the Definition set. For security reasons, all passwords saved in FlowHeater Definition sets are encrypted.

Return Code / Exit Code / Error Level

After running the Batch Module you can test the exit code in batch files (.CMD / .BAT) to find out whether the FlowHeater Definition ran successfully. The possible values are:

0 = The run completed successfully.

4 = The run ended with warnings.

8 = The run ended with an error message.

12 = The run was aborted with a fatal error. If the Definition that failed used a database Adapter then a rollback of the current transaction will have been performed automatically.

e.g.

@echo off

FHBatch.exe /MaxRows 10 Your-Import-Export-Definition.fhd

if %ERRORLEVEL% EQU 0 goto success

if %ERRORLEVEL% EQU 4 goto warnings

if %ERRORLEVEL% EQU 8 goto errors

if %ERRORLEVEL% EQU 12 goto abort

echo exit code not defined

goto end

REM Successful execution

:success

echo Execution completed successfully

goto end

REM Handling of warnings

:warnings

echo Execution ended with warnings

goto end

REM Handling of errors

:errors

echo Execution terminated with error messages

goto end

REM Handling of crashes

:abort

echo Execution aborted

goto end

REM end of script

:end

pause
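
The same exit-code handling can be sketched in Python, for instance when the batch module is started from a larger automation script. This is only an illustration: the names `describe_exit_code` and `run_definition` are hypothetical, FHBatch.exe is assumed to be on the PATH, and the only facts taken from the text above are the four exit codes and the /MaxRows option.

```python
import subprocess

# Exit codes documented above for FHBatch.exe.
EXIT_CODES = {
    0: "success",
    4: "warnings",
    8: "errors",
    12: "abort",
}


def describe_exit_code(code: int) -> str:
    """Map an FHBatch.exe exit code to a short status label."""
    return EXIT_CODES.get(code, "exit code not defined")


def run_definition(definition: str, max_rows: int = 10) -> str:
    """Run a Definition with FHBatch.exe (assumed to be on the PATH)
    and return the status label for its exit code."""
    result = subprocess.run(
        ["FHBatch.exe", "/MaxRows", str(max_rows), definition]
    )
    return describe_exit_code(result.returncode)
```

A caller could then branch on the returned label, e.g. `if run_definition("Your-Import-Export-Definition.fhd") != "success": ...`, in the same way the batch file above branches on %ERRORLEVEL%.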

Options

| Option | Description |
|---|---|
| /? or /help | Outputs a help description |
| /Silent | Suppresses all output on the console |
| /MaxRows n | Processes at most the first n records/rows |
| /SetParameter Name=Value | Sets the Parameter Name to the value entered |
| /SetParameter Name:Crypt=Value | Sets the Parameter Name to the value entered. The value must previously have been encrypted with the option /Crypt |
| /ParameterFile filename | Assigns Parameters from the specified UTF-8 file. For details on the structure of this file see below. |
| /Memory | Enables the memory processing mode |
| /Massdata | Enables the mass data processing mode |
| /ExecuteSteps "n, from-to" | Executes only the processing steps specified here. Any number of steps or ranges can be specified, separated by commas. |
| /LogFile filespec | Adds the result to the specified log file |
| /LogCompact | Produces a single-line (compact) output format |
| /LogQuote | Encloses individual log entries in quotation marks |
| /LogDelete | Deletes the specified log file before execution |
| /ShowDebug | Outputs debug messages to the shell standard output channel |
| /DisableDebug | Disables debug messages |
| /ShowWarnings | Outputs warning messages to the shell standard output channel |
| /DisableWarnings | Disables warning messages |
| /Crypt Value | Encrypts the value for subsequent use with the option /SetParameter Name:Crypt=Value |
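
When FHBatch.exe is started from a script, the options in the table can be assembled into an argument list. The following is a minimal sketch, not part of FlowHeater: the function `build_fhbatch_args` and its keyword names are hypothetical, only a few of the options are covered, and repeating /SetParameter once per Parameter is an assumption. Options are placed before the Definition file, as in the example command lines on this page.

```python
from typing import Dict, List, Optional


def build_fhbatch_args(
    definition: str,
    max_rows: Optional[int] = None,
    parameters: Optional[Dict[str, str]] = None,
    log_file: Optional[str] = None,
    silent: bool = False,
) -> List[str]:
    """Assemble an FHBatch.exe argument list (options first, Definition last)."""
    args = ["FHBatch.exe"]
    if silent:
        args.append("/Silent")
    if max_rows is not None:
        args += ["/MaxRows", str(max_rows)]
    # Assumption: one /SetParameter option is emitted per Parameter.
    for name, value in (parameters or {}).items():
        args += ["/SetParameter", f"{name}={value}"]
    if log_file is not None:
        args += ["/LogFile", log_file]
    args.append(definition)
    return args


print(build_fhbatch_args("import.fhd", max_rows=0, log_file="import.log"))
```

Passing the resulting list to `subprocess.run` avoids manual quoting of paths that contain spaces.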

Options only for TextFile, Excel and XML Adapter

With the TextFile, Excel and XML Adapters, the /ReadFileName and /WriteFileName options define which file is read or written. If a different Adapter is present on the respective side, using the option aborts the run with an error message.

| Option | Description |
|---|---|
| /ReadFileName filespec | Overwrites the filename field in the Definition file. By default, the filename is set for the first processing step and the first Adapter. The format /ReadFileName[:S[:A]] allows you to address other processing steps and Adapters, e.g. /ReadFileName:2:1 sets the READ side filename in the second processing step for the first Adapter. If the Adapter part [:A] is omitted, the first Adapter of the specified processing step is assumed. Wildcards such as C:\Temp\*.csv are allowed. |
| /WriteFileName filespec | Overwrites the filename field in the Definition file. By default, the filename is set for the last processing step and the first Adapter. The format /WriteFileName[:S[:A]] allows you to address other processing steps and Adapters, e.g. /WriteFileName:2:1 sets the WRITE side filename in the second processing step for the first Adapter. If the Adapter part [:A] is omitted, the first Adapter of the specified processing step is assumed. |

Note: Without the [:S[:A]] suffix, the /ReadFileName option sets the filename of the first Adapter in the first processing step, and the /WriteFileName option sets the output filename of the first Adapter in the last processing step. Alternative filenames for batch processing can also be defined using a FlowHeater Parameter, e.g. with an option like “/SetParameter FILE2=C:\Temp\export.csv”.
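
The [:S[:A]] addressing described above can be illustrated with a small helper that formats the option name. This is a sketch only: `file_name_option` is a hypothetical name, and the helper just builds the option text from the documented format without checking that the step or Adapter exists in the Definition.

```python
from typing import List, Optional


def file_name_option(
    side: str,
    filespec: str,
    step: Optional[int] = None,
    adapter: Optional[int] = None,
) -> List[str]:
    """Build a /ReadFileName or /WriteFileName option in the [:S[:A]] format."""
    if side not in ("Read", "Write"):
        raise ValueError("side must be 'Read' or 'Write'")
    if adapter is not None and step is None:
        raise ValueError("an Adapter number requires a processing step")
    option = f"/{side}FileName"
    if step is not None:
        option += f":{step}"
        # The Adapter part is optional; the first Adapter is assumed if omitted.
        if adapter is not None:
            option += f":{adapter}"
    return [option, filespec]


print(file_name_option("Read", r"C:\Temp\*.csv", step=2, adapter=1))
```

The two returned elements are the option and its filespec, ready to be placed in front of the Definition file on the command line.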

As of version 2.x all the options you selected using Designer in the Test and Execute popup are saved within the FlowHeater Definition. You only need the options detailed above when you wish to change the predefined options during batch execution.

For example, suppose you have a very large volume of data to import or export. While setting up and testing the Definition in the Designer, you select the option to process a maximum of 1000 records. This setting is stored in the Definition file, so if you run the Definition with the Batch Module without further changes, only the first 1000 records are processed. To make the Batch Module process all available records, override the option /MaxRows with the value 0 in the task parameters:

FHBatch.exe /MaxRows 0 import.fhd

This will process all available records/rows, regardless of the predefined setting in the Definition file.

Structure of the UTF-8 Parameter file for option /ParameterFile

The UTF-8 file specified by this option can be used to pass any number of FlowHeater Parameters to the Batch Module, which are then assigned before execution. To ensure that special characters, such as symbols and umlauts, are processed properly, the file must be encoded as UTF-8 (Codepage 65001). One Parameter can be set per line, e.g. "Parameter name=value". Blank lines are skipped, and lines beginning with the "#" character are ignored, so they can be used to insert comments and notes into the file.

An example Parameter file:

# Sets Parameter "P1" to value "123"

P1=123

# Sets Parameter "P2" to value "123" with surrounding spaces removed!

P2 = 123

# If embedded spaces are required, this can be achieved as follows:

# Sets Parameter "P3" to value " 123 " white space within quotes is retained

P3=" 123 "

# It is also possible to assign values containing the equals sign

# Sets Parameter "P4" to value "123=123"

P4=123=123

# If quote marks are also required, these can be assigned as follows

P5=""123""

# Of course, encrypted Parameter values can also be assigned:

P6:Crypt =f+BdILS19FM##
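
To make the rules in this example concrete, here is a minimal Python sketch of a reader for such a file. It is one possible reading of the rules described above, not FlowHeater's own parser: the function name `parse_parameter_file` is hypothetical, and the handling of lines without an equals sign and of indented comment lines are assumptions.

```python
from typing import Dict


def parse_parameter_file(text: str) -> Dict[str, str]:
    """Parse parameter-file text into a dict of Parameter name -> value."""
    parameters: Dict[str, str] = {}
    for line in text.splitlines():
        stripped = line.strip()
        # Skip blank lines and comment lines
        # (assumption: leading whitespace before "#" is tolerated).
        if not stripped or stripped.startswith("#"):
            continue
        # Split at the first "=" only, so values may contain "=".
        name, sep, value = stripped.partition("=")
        if not sep:
            continue  # assumption: lines without "=" are ignored
        name, value = name.strip(), value.strip()
        # Remove one pair of surrounding quotes; whitespace inside is kept.
        if len(value) >= 2 and value.startswith('"') and value.endswith('"'):
            value = value[1:-1]
        parameters[name] = value
    return parameters


example = 'P2 = 123\nP3=" 123 "\nP4=123=123\n'
print(parse_parameter_file(example))
```

When reading a real file, open it with an explicit UTF-8 encoding, for example `open(path, encoding="utf-8")`, since the file must be UTF-8 encoded as stated above.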

Examples of usage

The batch module makes it easy to export data on a regular basis. For example, every night you could automatically export the day’s turnover figures into a CSV text file. Further potential uses include taking flat files or test data, formatted with fixed column widths, and importing these on demand into an SQL Server database for testing purposes (a feature sure to bring a smile to your developers).

The following examples illustrate the batch module in action