Adapter PostgreSQL - Import, Export, Update and Delete

The PostgreSQL Adapter is used to import (insert), export (select), replace (update) and remove (delete) records in the tables and views of a PostgreSQL database. PostgreSQL databases up to version 15.x are supported. The data is accessed directly, without installing additional drivers.

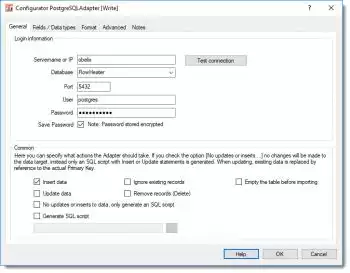

PostgreSQL configuration - General tab

Connection and authentication

Server name or IP: This defines the PostgreSQL server host that FlowHeater should connect to. You can enter either a host name that is resolved via DNS or an IP address.


Database: Enter the name of the PostgreSQL database that the import/export is to run against.

Port: The TCP port on which the PostgreSQL server accepts connections. Default = 5432.

User / Password: Enter the database user and password that FlowHeater uses to authenticate its connection to the PostgreSQL database. Important: The password is only stored if you check the "Save Password" option. A saved password is stored in encrypted form in the Definition data.
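To make the relationship between these fields concrete, the following is a minimal sketch that assembles the same values into a libpq-style connection URI. It does not show how FlowHeater itself connects or stores its settings. The function name `build_postgres_uri` and the sample host, database and user are hypothetical.

```python
from urllib.parse import quote


def build_postgres_uri(host, database, user, password=None, port=5432):
    """Assemble a libpq-style connection URI from the General tab values.

    Hypothetical helper for illustration only; FlowHeater does not expose
    such a function. An IPv6 address would additionally need [brackets].
    """
    # Percent-encode user and password so characters such as '@' or spaces
    # cannot be mistaken for URI delimiters.
    credentials = quote(user, safe="")
    if password is not None:
        credentials += ":" + quote(password, safe="")
    return f"postgresql://{credentials}@{host}:{port}/{quote(database, safe='')}"


# Sample values (assumptions, not taken from any real installation).
uri = build_postgres_uri("db.example.com", "sales", "importer", "p@ss word")
print(uri)
```

If the port is omitted, the sketch falls back to 5432, mirroring the default described above.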

Common

Insert data: When this option is checked, SQL Insert statements are generated.

Ignore existing records: When this option is checked, records that already exist in the table are ignored during an import.

Empty the table before importing: When this is checked you tell the PostgreSQL Adapter to empty the contents of the table prior to running the import, effectively deleting all existing rows.

Update data: When this option is checked, SQL Update statements are generated. Note: If both the Insert and Update options are checked, the PostgreSQL Adapter checks for each record whether an SQL Update or an SQL Insert should be generated, by reference to the Primary Key. Tip: If you are certain there is only data to insert, leave the Update option unchecked, as this makes the process significantly faster.

Remove records (Delete): This attempts to delete existing records by reference to the Primary Key fields. Note: This option cannot be used together with the Insert or Update options.

No updates or inserts to data, only generate an SQL script: When this option is checked, it signals the PostgreSQL Adapter to make no inline changes to the database, but instead to store an SQL script with Insert and/or Update statements. This is useful for testing during development and for subsequent application to the database. If this option is checked you should also check the option below and enter the filename that the SQL statements are to be stored in.

Generate SQL script: This option instructs the PostgreSQL Adapter to store the change statements (Insert, Update) as an SQL script file with the specified name and path.
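The interplay of the Insert, Update and Ignore options described above can be sketched as a per-record decision. This is an illustrative model only, not the Adapter's actual implementation. The function name `choose_action` and its parameters are hypothetical, and the cases the text does not describe are marked as assumptions in the comments.

```python
def choose_action(key, existing_keys, insert=True, update=False,
                  ignore_existing=False):
    """Return 'INSERT', 'UPDATE' or 'SKIP' for one incoming record.

    key           -- primary key value of the incoming record
    existing_keys -- set of primary key values already present in the table
    """
    if key in existing_keys:
        if update:
            # Record exists and Update is checked: replace it.
            return "UPDATE"
        if ignore_existing:
            # Record exists and is to be ignored.
            return "SKIP"
        # Assumption: with Insert only, the INSERT is still attempted and the
        # database itself would reject the duplicate key.
        return "INSERT"
    # Assumption: a record that does not exist yet is skipped when only
    # Update is checked.
    return "INSERT" if insert else "SKIP"


existing = {1, 2, 3}
for key in (2, 7):
    print(key, choose_action(key, existing, insert=True, update=True))
```

The lookup against existing keys is the extra work behind the tip above: with Insert alone no existence check is needed, which is why unchecking Update speeds up pure inserts.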

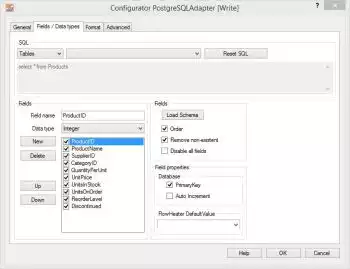

Fields / Data types tab

SQL: The data available varies according to the side of the Adapter in use:


On the READ side: here you can choose from Tables and Views.

On the WRITE side: only Tables are available.

On the READ side you can also enter complex SQL statements in the text field. Table joins must be defined by hand. The second combo box lists the tables and views that are available in the specified PostgreSQL database.

Fields: When you click the Load Schema button, information is retrieved from the database schema (field names, field sizes, data types, primary key, etc.) for the SQL statement above. The information about the fields is then loaded into the field list to the left of this button.

Note: The fields in the field list can be ordered in any sequence required. Fields that are not required can either be temporarily disabled here (tick removed) or simply deleted.

Processing of PostgreSQL array fields: At present the PostgreSQL Adapter can only process arrays in conjunction with the STRING FlowHeater data type. The entire array content of a field is always read or replaced as a whole. The notation conforms to the general PostgreSQL array syntax, e.g. {"one", "two", "three"} or {1, 2, 3}. You will find some examples here on handling PostgreSQL array fields.
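Because arrays travel through the Adapter as plain strings, it can help to see how such a string is assembled and taken apart. The sketch below handles flat, one-dimensional arrays only. The helper names `format_pg_array` and `parse_pg_array` are hypothetical and not part of FlowHeater; nested arrays and backslash escapes outside of quotes are deliberately not covered.

```python
def format_pg_array(items):
    """Render a flat list of strings/numbers as a PostgreSQL array literal."""
    parts = []
    for item in items:
        if item is None:
            parts.append("NULL")
        elif isinstance(item, (int, float)):
            parts.append(str(item))
        else:
            # Quote strings; escape backslashes and double quotes.
            escaped = str(item).replace("\\", "\\\\").replace('"', '\\"')
            parts.append(f'"{escaped}"')
    return "{" + ",".join(parts) + "}"


def _finish(buf, quoted):
    """Turn the collected characters of one element into a value."""
    value = "".join(buf)
    if quoted:
        return value
    value = value.strip()
    return None if value.upper() == "NULL" else value


def parse_pg_array(text):
    """Parse a one-dimensional array literal into a list of strings."""
    text = text.strip()
    if not (text.startswith("{") and text.endswith("}")):
        raise ValueError("not a PostgreSQL array literal")
    body = text[1:-1]
    items, buf, quoted, in_quotes = [], [], False, False
    i = 0
    while i < len(body):
        ch = body[i]
        if in_quotes:
            if ch == "\\" and i + 1 < len(body):
                buf.append(body[i + 1])  # take the escaped character as is
                i += 1
            elif ch == '"':
                in_quotes = False
            else:
                buf.append(ch)
        elif ch == '"':
            in_quotes, quoted, buf = True, True, []  # drop leading blanks
        elif ch == ",":
            items.append(_finish(buf, quoted))
            buf, quoted = [], False
        elif quoted and ch.isspace():
            pass  # ignore blanks after a closing quote
        else:
            buf.append(ch)
        i += 1
    if buf or quoted:
        items.append(_finish(buf, quoted))
    return items


print(format_pg_array(["one", "two", "three"]))
print(parse_pg_array('{"one", "two", "three"}'))
print(parse_pg_array("{1, 2, 3}"))
```

Note that the parser returns every element as a string (or None for NULL), in keeping with the STRING-only handling described above.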

Field properties: Here you can adjust how the Primary Key and Auto Increment (Serial) properties of the currently highlighted field are interpreted. This information is only required on the WRITE side. Generally no changes are needed here, since the correct information is usually obtained directly from the schema.

A Primary Key field is used in an Update to identify a record that may already exist.

Auto Increment fields are neither assigned nor amended in Insert/Update statements.

Warning: Changes made here can result in an Update modifying more than one record!
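The effect of these two field properties on a generated Update can be sketched as follows. This is an assumption about the general shape of such a statement, not the Adapter's exact output. The `Field` class, the `build_update` function, the table name and the `%s` placeholders (the DB-API "format" parameter style) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Field:
    name: str
    primary_key: bool = False
    auto_increment: bool = False


def build_update(table, fields):
    """Build a parameterised UPDATE from the field properties.

    Primary Key fields go into the WHERE clause, Auto Increment fields are
    never assigned, and all remaining fields go into the SET clause.
    """
    set_cols = [f.name for f in fields
                if not f.primary_key and not f.auto_increment]
    key_cols = [f.name for f in fields if f.primary_key]
    if not key_cols:
        raise ValueError("at least one Primary Key field is required")
    if not set_cols:
        raise ValueError("no fields left to update")
    set_clause = ", ".join(f'"{c}" = %s' for c in set_cols)
    where_clause = " AND ".join(f'"{c}" = %s' for c in key_cols)
    return f'UPDATE "{table}" SET {set_clause} WHERE {where_clause}'


# Properties as they would typically be loaded from the schema.
from_schema = [Field("id", primary_key=True, auto_increment=True),
               Field("name"), Field("email")]
print(build_update("customers", from_schema))

# Properties changed by hand: "name" is not unique, so the WHERE clause
# may now match several rows.
changed = [Field("id", auto_increment=True),
           Field("name", primary_key=True), Field("email")]
print(build_update("customers", changed))
```

The second statement illustrates the warning above: once a non-unique field is flagged as Primary Key, a single Update can touch more than one record.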

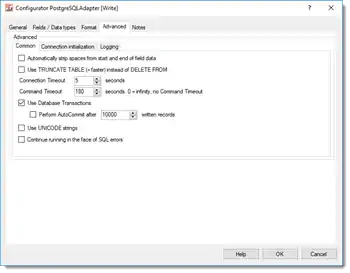

Advanced tab

General

Automatically strip spaces from the start and end of content: If you check this option, whitespace is automatically trimmed from STRING data types. This means that any spaces, tabs and line break characters are removed from the start and end of strings.
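As a minimal sketch of what such an auto trim amounts to, assuming exactly the characters listed above (space, tab, carriage return and line feed) are stripped; the function name `auto_trim` is hypothetical:

```python
def auto_trim(value):
    """Strip spaces, tabs and line breaks from both ends of a string.

    Non-string values are returned unchanged, since the option only
    applies to STRING data types.
    """
    if isinstance(value, str):
        return value.strip(" \t\r\n")
    return value


print(repr(auto_trim("  \tAcme Ltd.\r\n")))
print(repr(auto_trim(" a  b ")))
print(repr(auto_trim(42)))
```

Whitespace inside the string is left untouched; only the leading and trailing parts are affected.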


Use TRUNCATE TABLE (=quicker) instead of DELETE FROM: When the option “Empty the table before importing” on the General tab is checked, “TRUNCATE TABLE” is used to empty the table instead of “DELETE FROM” (the default).

Connection timeout: Timeout in seconds while waiting for a connection to be established. If no connection has been made after this period, the import/export run is aborted.

Command timeout: Timeout in seconds while waiting for an SQL command to complete. Entering a value of zero disables the timeout altogether. In that case SQL commands never time out and are awaited indefinitely. This option is useful when you select massive data volumes from a database on the READ side and the PostgreSQL database takes a long time to prepare its result.

Use database transactions: This allows you to control how data is imported. By default the PostgreSQL Adapter uses a single “large” transaction to secure the entire import process. When importing extremely large volumes you may have to adapt the transactional behavior to your needs using the option “Run AutoCommit after writing every X records”.
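The difference between one large transaction and an AutoCommit after every X records can be sketched with a small driver loop. This is an illustrative model, not FlowHeater code. The function `write_with_autocommit` and its `write` and `commit` callbacks are hypothetical stand-ins for the actual database calls.

```python
def write_with_autocommit(records, write, commit, every=None):
    """Write all records and return the number of commits issued.

    every=None models the default: one large transaction that is committed
    once at the end. every=X commits after each block of X records.
    """
    pending = 0
    commits = 0
    for record in records:
        write(record)
        pending += 1
        if every and pending >= every:
            commit()
            commits += 1
            pending = 0
    if pending:
        # Commit whatever is left over from the last (partial) block.
        commit()
        commits += 1
    return commits


written = []
commit_points = []
count = write_with_autocommit(
    range(10),
    write=written.append,
    commit=lambda: commit_points.append(len(written)),
    every=4,
)
print(count, commit_points)
```

Smaller blocks keep each transaction short, at the price that a failure part way through leaves the earlier blocks already committed.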

Use UNICODE strings: When checked, this option forces an N prefix to be output before the opening apostrophe of string values, e.g. N'import value' instead of 'import value'.
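The following minimal sketch shows what the two literal forms look like when produced programmatically. The function name `sql_string_literal` is hypothetical, and doubling embedded apostrophes is the standard SQL escaping rule, assumed here rather than confirmed as the Adapter's exact behavior.

```python
def sql_string_literal(value, unicode_prefix=False):
    """Render a Python string as an SQL string literal.

    With unicode_prefix=True the literal is emitted as N'...'.
    """
    # Standard SQL escaping: an apostrophe inside a literal is doubled.
    escaped = value.replace("'", "''")
    prefix = "N" if unicode_prefix else ""
    return f"{prefix}'{escaped}'"


print(sql_string_literal("import value", unicode_prefix=True))
print(sql_string_literal("import value"))
print(sql_string_literal("O'Brien"))
```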

Continue running in the face of SQL errors: This option allows you to instruct FlowHeater not to abort a run when it encounters a PostgreSQL database error. Warning: This option should only be used in exceptional circumstances, because database inconsistencies could result.

Connection initialization

User-defined SQL instructions for initializing the connection: You can place any user-defined SQL commands here. They are used to initialize the connection after it has been established to the PostgreSQL server.

Adapter settings in the Format tab

PostgreSql Adapter Examples